How to Find Large Files in an AWS S3 Bucket Using Command Line Interface

Using one simple command

Software Engineer, Content Creator, and Open-Source contributor

I recently struggled to find the right command to search for large files in an AWS S3 bucket and spent many hours researching the topic. I am new to AWS CloudShell, so I asked the community for help and even created an account on AWS re:Post (the Stack Overflow alternative for Amazon Web Services). I recommend re:Post if you need help with AWS, but because it has fewer users, you can usually get an answer faster on Stack Overflow. In my opinion, if you have a question, it is best to ask on both platforms.

Finding Files using AWS CloudShell

For this example, I chose AWS CloudShell because it is the easiest way to use the AWS CLI without installing any software on your computer. You can use any other tool you like.

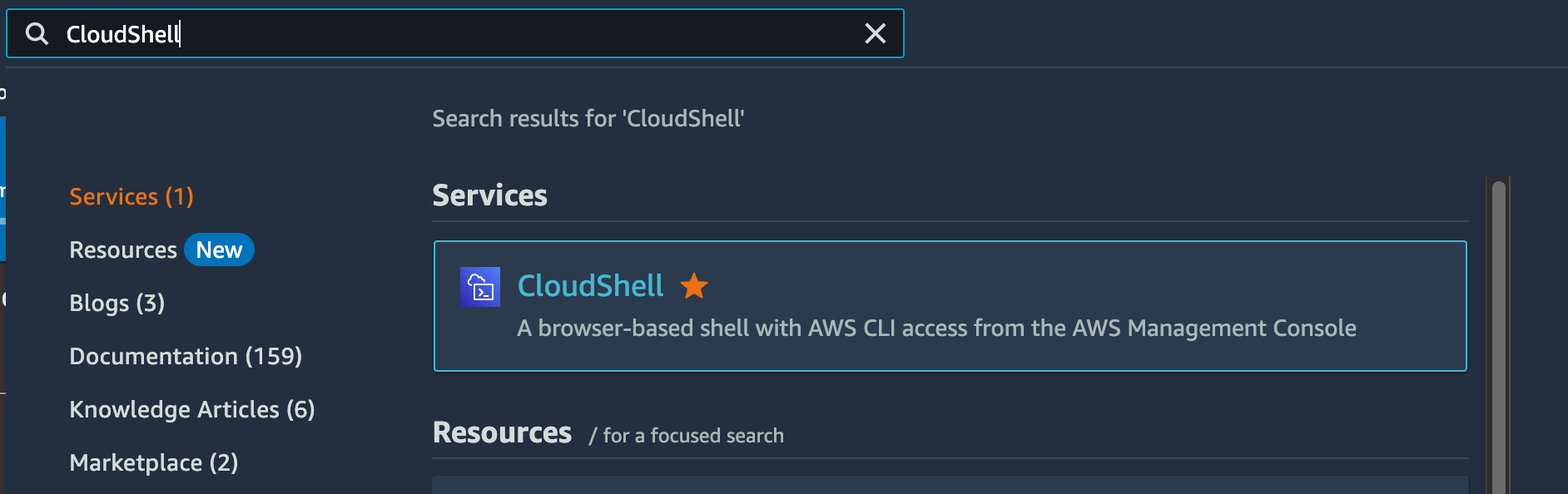

To find the N largest recent files in an AWS S3 bucket, open console.aws.amazon.com and find CloudShell (I recommend adding it to your favourites in case you need it again).

Before running the command, you need to know your S3 bucket name. When you have it ready, use the command below to find the files:

aws s3api list-objects-v2 --bucket BUCKETNAME --query 'sort_by(Contents[?LastModified>=`2023-01-01`], &Size)[-5:]'

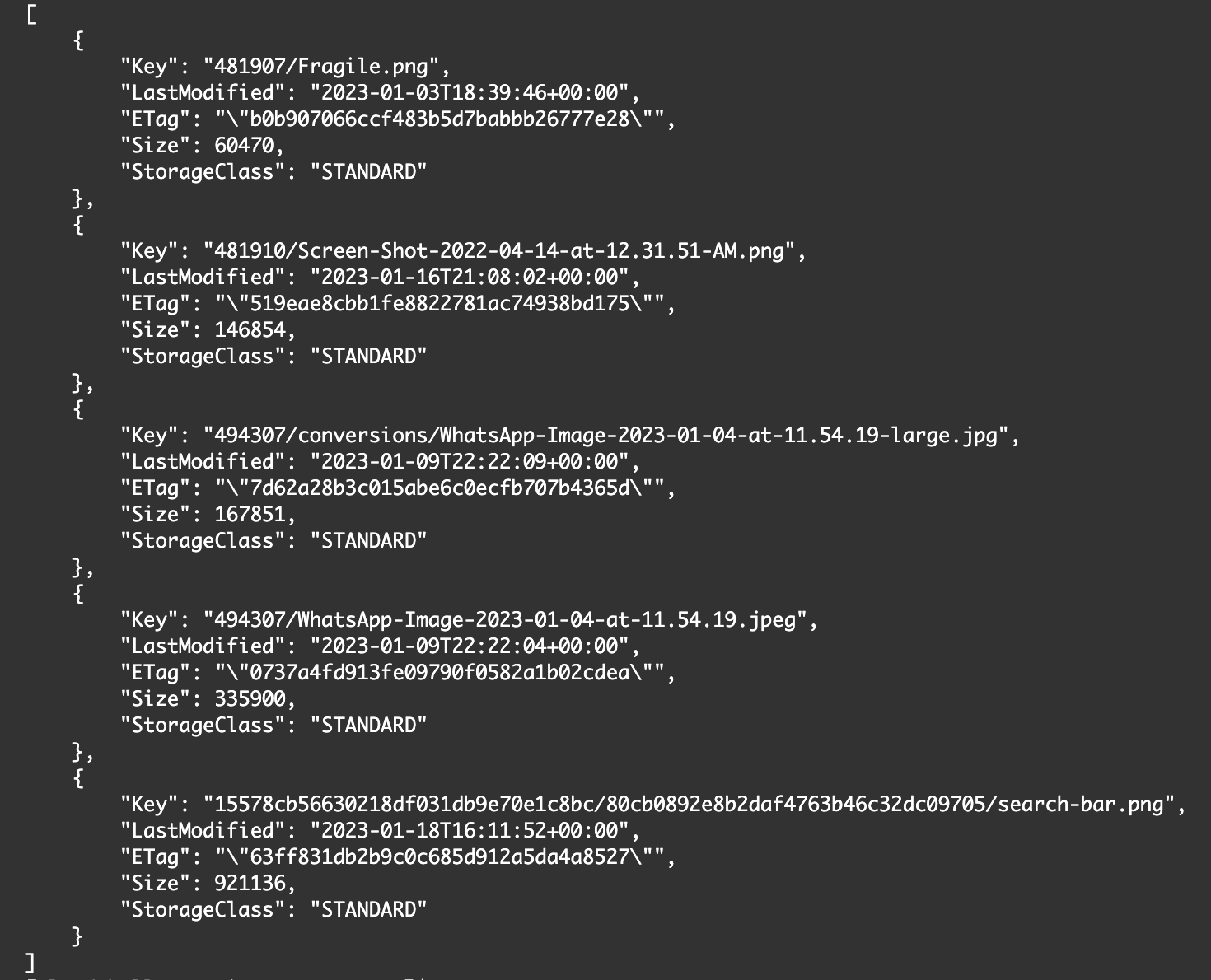

Replace BUCKETNAME with your S3 bucket name and change the date (2023-01-01) as needed. The command returns a JSON array of the five largest objects modified on or after that date, ordered from smallest to largest.
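If you run this search often, it may be handy to wrap it in a small shell function so the bucket, date and count become arguments. The snippet below is only a sketch: the function names build_query and largest_since are my own invention, and it assumes the AWS CLI is installed and has credentials (as it does inside CloudShell).

```shell
# Hypothetical helpers; only the aws s3api call and its query come from the command above.

# Print the JMESPath query: objects modified on or after SINCE,
# sorted by size (ascending), keeping the last COUNT entries.
build_query() {
  local since="$1" count="$2"
  printf 'sort_by(Contents[?LastModified>=`%s`], &Size)[-%s:]' "$since" "$count"
}

# Run the query against a bucket. COUNT defaults to 5.
largest_since() {
  local bucket="$1" since="$2" count="${3:-5}"
  aws s3api list-objects-v2 --bucket "$bucket" \
    --query "$(build_query "$since" "$count")"
}

# Example (needs AWS credentials):
#   largest_since BUCKETNAME 2023-01-01 5
```

Keeping the query in its own function makes it easy to print and check before anything is sent to AWS.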

Ignore LastModified Property

If you want to find the N largest files regardless of the LastModified property, you can use this command instead:

aws s3api list-objects-v2 --bucket BUCKETNAME --query 'sort_by(Contents, &Size)[-5:]'

Adjusting the Number of Files

You can change how many files are returned by changing the number in the square brackets ([-5:]), or remove the brackets and their contents to display all files sorted by size. Be careful when running such a command on a large bucket, though, as it can take minutes to complete (I did it once...).
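As a variation on the slice, the query can also be flipped so the largest file comes first and only the Key and Size fields are printed. This is a sketch rather than something I use daily: top_n_query is a made-up helper name, while reverse() and the {Key: Key, Size: Size} projection are standard JMESPath.

```shell
# Hypothetical helper: print a query that returns the COUNT largest objects,
# largest first, keeping only the Key and Size fields. COUNT defaults to 5.
top_n_query() {
  local count="${1:-5}"
  printf 'reverse(sort_by(Contents, &Size))[:%s].{Key: Key, Size: Size}' "$count"
}

# Example (needs AWS credentials):
#   aws s3api list-objects-v2 --bucket BUCKETNAME --query "$(top_n_query 10)"
```

The whole bucket is still listed before the query is applied, so this only shortens the output, not the waiting time.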

Bonus Command

There is another command that I rarely use but thought would be useful to share.

aws s3 ls --summarize --human-readable --recursive s3://BUCKETNAME

This command lists all files in the bucket in a compact form, showing the last-modified date, size and name of each file, followed by a summary with the total number of objects and their total size. If you have ever run the ls command on a Linux/Unix operating system before, you know what to expect.
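The ls listing can also be sorted by size with ordinary Unix tools. The sketch below assumes each line has the form date, time, size in bytes, key, which is what aws s3 ls --recursive prints when --human-readable is left out. The function name largest_in_listing is hypothetical.

```shell
# Hypothetical helper: read `aws s3 ls --recursive` lines from stdin
# (date, time, size in bytes, key) and keep the COUNT largest entries,
# smallest first. COUNT defaults to 5.
largest_in_listing() {
  local count="${1:-5}"
  # Sort numerically on the third column (the size), then keep the tail.
  sort -k3,3n | tail -n "$count"
}

# Example (needs AWS credentials; --human-readable and --summarize are omitted
# so the third column stays a plain byte count):
#   aws s3 ls --recursive s3://BUCKETNAME | largest_in_listing 5
```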

The end. I hope you found this information helpful, stay tuned for more content! :)